Disruption and the Rewriting of the World

Disruptions rarely arrive with a clear declaration of intent. They begin as faint tremors beneath the surface of established order, unsettling assumptions before revealing their transformative force. History offers many such moments—the printing press, the steam engine, electricity, the internet. Each altered the structure of society, redistributing power, reshaping economies, and redefining how humans relate to knowledge and to one another.

Artificial Intelligence belongs unmistakably to this lineage, yet it differs in one profound respect. Earlier disruptions transformed the conditions of human life; AI has begun to transform the conditions of human thought. It does not merely extend our reach into the world—it reaches back into the very processes by which we perceive, decide, and understand.

At its core, AI is an architecture of learning from data: vast, accumulated reservoirs of human language, behaviour, preferences, and knowledge. Through pattern recognition and probabilistic inference, it produces outputs that increasingly resemble human reasoning. This has enabled remarkable advances, among them faster medical diagnoses, more efficient systems of governance, accelerated scientific discovery, and an unprecedented democratisation of information.

Yet, embedded within this promise is a quiet shift—one that is easy to overlook.

In earlier eras, humans sought information. Today, information increasingly seeks us. Algorithms filter, prioritise, and present content in ways that shape our attention. AI deepens this phenomenon. It does not simply retrieve knowledge; it interprets, summarises, and, in subtle ways, frames reality.

The question, therefore, is no longer limited to access. It extends to mediation: who or what stands between us and the world we believe we are seeing?

The reflections of Yuval Noah Harari acquire particular relevance here. He suggests that power in the contemporary world may increasingly derive from control over data—its collection, curation, and application. If this is so, AI represents not merely a technological advancement, but a reconfiguration of power itself.

This reconfiguration is neither inherently benign nor malign. It depends on how systems are designed, governed, and distributed. Transparent, accountable frameworks can harness AI for public good; opaque, concentrated systems may amplify asymmetries of power.

There is also a subtler transformation underway—one that touches human cognition. As AI systems become more capable, there is a tendency, often unconscious, to defer to them. Recommendations become decisions; suggestions become conclusions. The efficiency they offer can gradually evolve into dependence.

This is not a failure of technology; it is a feature of convenience. Yet, it raises an important question: if cognition is increasingly externalised—delegated to systems that operate beyond immediate human comprehension—what happens to the habits of independent thought?

The risk is not that we will cease to think, but that we may begin to think less actively—relying on systems that anticipate and respond before reflection fully unfolds.

Disruptions, as history shows, do not determine outcomes; they expand possibilities. The printing press enabled both enlightenment and propaganda. The internet connected humanity while fragmenting attention. AI, too, will most likely unfold across a spectrum shaped by human choices.

The challenge, therefore, is not to resist disruption, but to remain conscious within it—to ensure that the expansion of intelligence does not come at the cost of autonomy.

For in rewriting the world, AI is also, quietly, rewriting the way we engage with it—and, perhaps, the way we understand ourselves.

(Uday Kumar Varma is a retired IAS officer and former Secretary, Ministry of Information & Broadcasting.)


Related Items

A light-Hearted Reflection of An Average Man on IWD

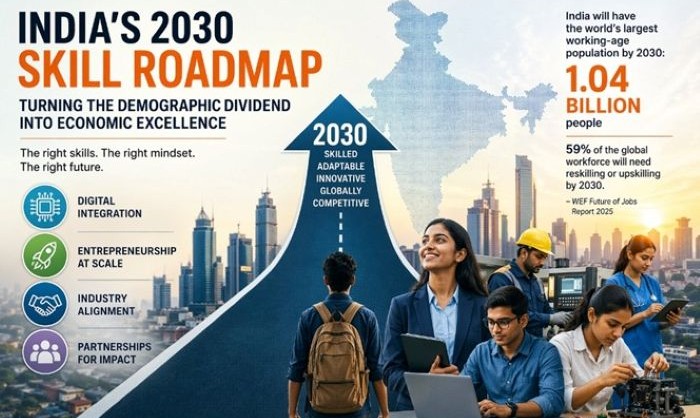

Our relevance to global talent skill market is only going to grow: EAM

The Soul of the Pen: A reflection by Krishan Gopal Sharma